A/B Testing in 2026: Building Growth Through Smarter Experiments

For too long, A/B testing has been reduced to a cliché: “Should our button be red or green?” In reality, experimentation has always been about something much bigger: learning how customers behave and making decisions grounded in evidence, not opinion.

In 2026 and beyond, testing is becoming the discipline that separates teams that scale reliably from those that keep guessing. This article walks you through:

- 1. What A/B testing really means (without the jargon)

- 2. How experimentation is evolving in 2026

- 3. Mistakes that hold teams back

- 4. Lessons from high-velocity testing cultures

- 5. How to embed testing into your company’s DNA

Why A/B Testing Matters

Numbers remind us why this practice is powerful:

- –> 77% of companies run A/B tests on their websites today【99firms】.

- –> 60% of businesses specifically test landing pages, where first impressions drive signups and sales【99firms】.

- –> Yet only 1 in 8 tests delivers a statistically significant improvement【99firms】. Most tests fail to move the needle, whether because of poor design, the wrong metrics, or weak hypotheses.

- –> At scale, the impact compounds: Microsoft reported 10–25% revenue per search improvement from disciplined experimentation at Bing【99firms】.

- –> The A/B testing tools market is projected to grow at over 11% CAGR through 2030, reaching nearly USD 1 billion in value【vwo】【coursera】.

The conclusion: testing works, but only when done with rigor and vision.

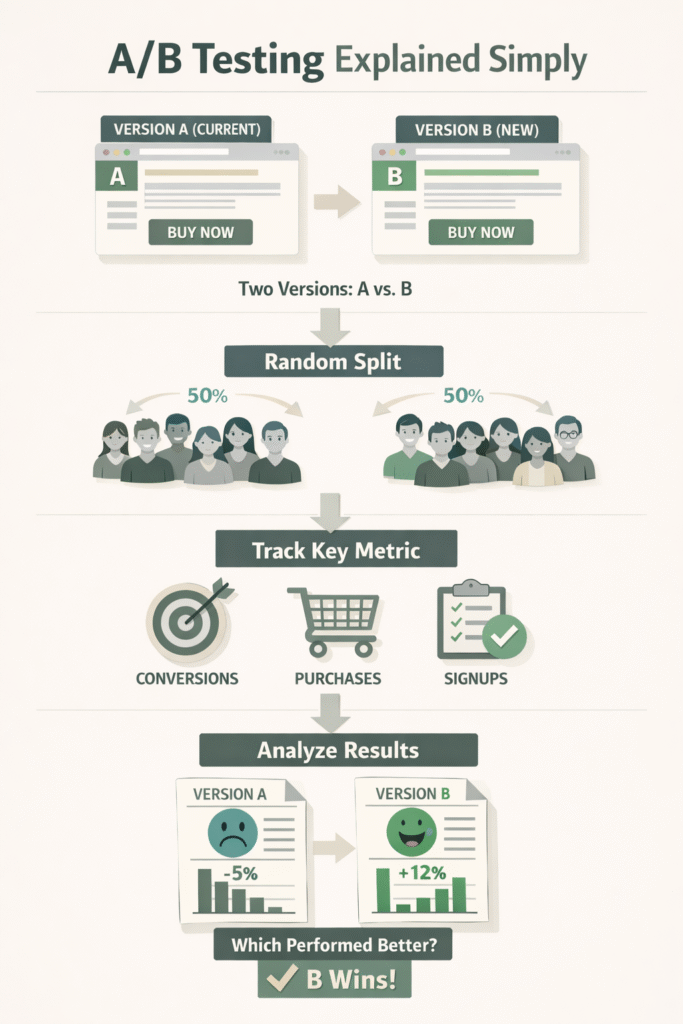

A/B Testing Explained Simply

At its core, A/B testing is straightforward:

- 1. You create two versions of something (A = current, B = new).

- 2. Visitors are randomly split between them.

- 3. You track a key metric: conversions, purchases, signups.

- 4. Once enough data is collected, you check which version performed better.

That’s it.
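The four steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production framework: the function names `assign_variant` and `two_proportion_z_test` are hypothetical, the 50/50 hash-based split is one common way to randomize, and the pooled two-proportion z-test is one standard way to compare conversion rates.

```python
import hashlib
from statistics import NormalDist


def assign_variant(user_id: str, experiment: str) -> str:
    """Deterministically bucket a user into 'A' or 'B' (roughly 50/50).

    Hashing the experiment name together with the user ID keeps a user
    in the same variant on every visit without storing any state.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).hexdigest()
    return "A" if int(digest, 16) % 2 == 0 else "B"


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (absolute lift of B over A, two-sided p-value).

    Assumes both groups have visitors and the pooled rate is not 0 or 1.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = (pooled * (1 - pooled) * (1 / n_a + 1 / n_b)) ** 0.5
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return p_b - p_a, p_value


# Illustrative numbers only: 100/1000 conversions on A, 150/1000 on B.
lift, p_value = two_proportion_z_test(100, 1000, 150, 1000)
print(f"lift={lift:.3f}, p={p_value:.4f}")
```

A small p-value suggests the difference is unlikely to be noise, but it only means something if the sample size and test duration were fixed before the test started.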

Imagine a local ice cream shop testing whether chocolate or vanilla sells more on weekends. Instead of guessing, they record sales over two weekends and let the numbers speak.

A/B testing applies the same logic to digital experiences: webpages, apps, emails, pricing models.

Extensions worth noting:

- 1. Multivariate testing: testing several changes at once (headline + image + button).

- 2. Journey testing: measuring not just clicks but full flows (e.g. onboarding or checkout).

- 3. Server-side testing: running experiments deeper in the stack, like recommendation algorithms or pricing logic.
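To see why multivariate tests need far more traffic than a simple A/B test, it helps to enumerate the combinations. The sketch below assumes a full-factorial design with made-up headline, image, and button options.

```python
from itertools import product

# Hypothetical options for each element under test.
headlines = ["Save time", "Save money"]
images = ["team.png", "product.png"]
buttons = ["Start free trial", "Get a demo"]

# Full-factorial design: every combination becomes its own variant.
variants = [
    {"headline": h, "image": i, "button": b}
    for h, i, b in product(headlines, images, buttons)
]

print(len(variants))  # 2 * 2 * 2 = 8 variants
```

With eight cells, each variant receives only an eighth of the traffic, so reaching a reliable sample size takes correspondingly longer.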

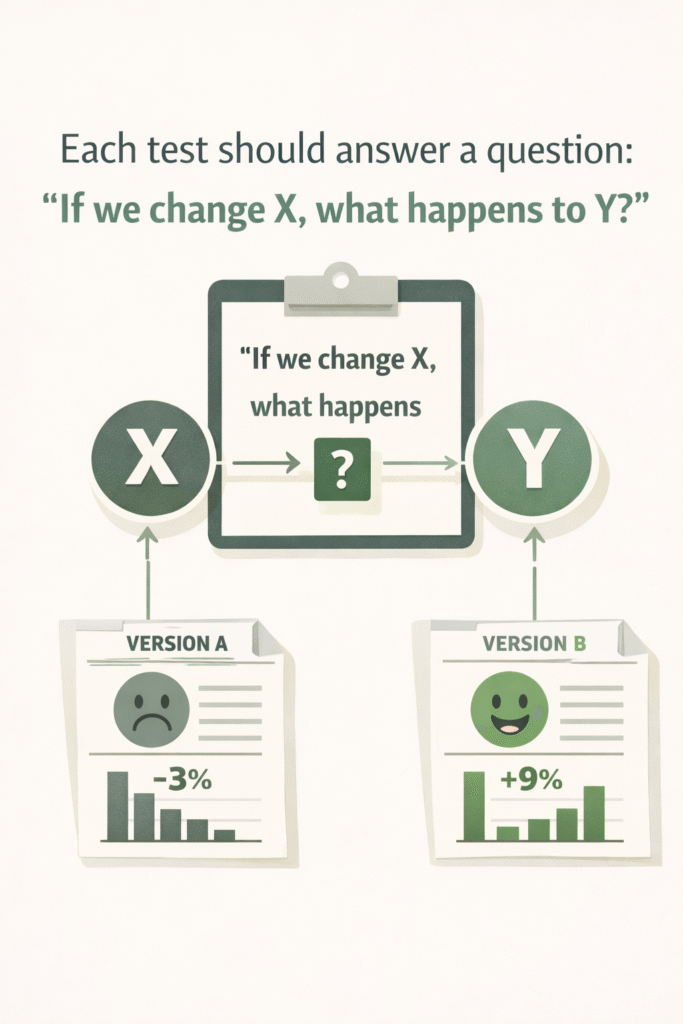

The real value lies in structured learning.

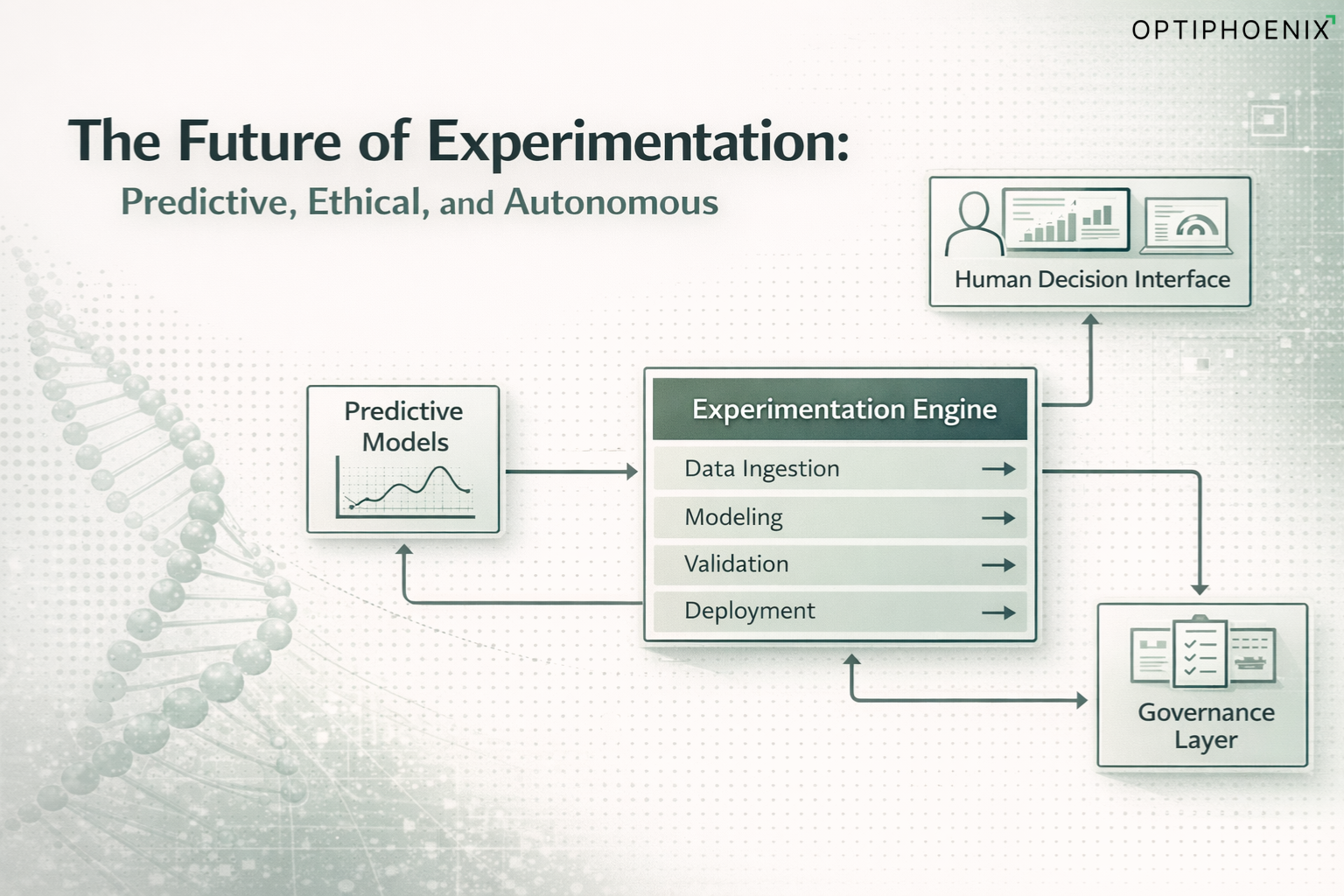

How Testing is Evolving in 2026

The principles remain, but the practice has matured. Here are shifts every leader should recognize:

1. Speed and Adaptiveness

Waiting weeks for answers no longer works. Teams use sequential and Bayesian methods to make reliable decisions faster. Some platforms automatically adjust traffic allocation to favor promising variants while still gathering evidence.
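One common way to shift traffic toward promising variants while still gathering evidence is Thompson sampling. The sketch below is an assumption about how such a platform might work, not a description of any specific tool: each variant gets a Beta posterior over its conversion rate (with a uniform Beta(1, 1) prior), and the next visitor is sent to whichever variant draws the highest sample. The function name `choose_variant` is hypothetical.

```python
import random


def choose_variant(stats, rng=random):
    """Pick the variant for the next visitor via Thompson sampling.

    stats maps variant name -> (conversions, visitors).
    """
    draws = {
        name: rng.betavariate(1 + conversions, 1 + (visitors - conversions))
        for name, (conversions, visitors) in stats.items()
    }
    return max(draws, key=draws.get)


# Illustrative data: B currently converts better, so it tends to get more traffic.
stats = {"A": (40, 1000), "B": (55, 1000)}
rng = random.Random(42)
picks = [choose_variant(stats, rng) for _ in range(1000)]
print({name: picks.count(name) for name in stats})
```

Because the choice is sampled rather than greedy, weaker variants still receive some traffic until the evidence against them is strong.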

2. Testing Beyond Design

Experiments are no longer limited to colors and layouts. Today’s scope includes:

- Onboarding flows

- Checkout steps

- Pricing and bundling

- Recommendation engines

- Email sequences and timing

This broadens experimentation from “marketing tweak” to “business decision-making.”

3. Segment and Context Awareness

A global winner may not be a winner for everyone. For example:

- A variant that boosts conversions on desktop might fail on mobile.

- A checkout change could help new users but frustrate loyal ones.

In 2026, segment-specific insights will be essential.
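A segment breakdown can be as simple as grouping results by segment and variant before comparing rates. The sketch below assumes each visit is recorded as a `(segment, variant, converted)` tuple; the function name and data shape are illustrative.

```python
from collections import defaultdict


def conversion_by_segment(rows):
    """Return {(segment, variant): conversion rate} from visit records.

    rows is an iterable of (segment, variant, converted) tuples.
    """
    totals = defaultdict(lambda: [0, 0])  # key -> [conversions, visitors]
    for segment, variant, converted in rows:
        cell = totals[(segment, variant)]
        cell[0] += int(converted)
        cell[1] += 1
    return {key: conv / n for key, (conv, n) in totals.items()}


# Tiny made-up dataset, only to show the shape of the output.
rows = [
    ("desktop", "A", True), ("desktop", "A", False),
    ("desktop", "B", True), ("desktop", "B", True),
    ("mobile", "A", True), ("mobile", "A", False),
    ("mobile", "B", False), ("mobile", "B", False),
]
for key, rate in sorted(conversion_by_segment(rows).items()):
    print(key, rate)
```

Each segment has less data than the overall test, so per-segment differences need their own significance check before anyone acts on them.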

4. Rigor in Analysis

Academic work stresses validating assumptions. In one 2025 paper, Jeunen et al. proposed repeated A/A tests to check for false positives before trusting results【arxiv】. Another framework balances multiple metrics (revenue, retention, cost) under constraints【arxiv】.
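The idea behind A/A testing can be illustrated with a small simulation: run many tests where both arms are identical and count how often the analysis declares a "winner". This is a generic sketch, not the procedure from the cited paper; the function names, the 10% conversion rate, and the pooled z-test are assumptions chosen for illustration.

```python
import random
from statistics import NormalDist


def p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = (pooled * (1 - pooled) * (1 / n_a + 1 / n_b)) ** 0.5
    if se == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def aa_false_positive_rate(rate=0.10, n=1000, runs=300, alpha=0.05, seed=0):
    """Simulate A/A tests and return the share flagged as significant."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(runs):
        # Both arms draw from the same true conversion rate.
        a = sum(rng.random() < rate for _ in range(n))
        b = sum(rng.random() < rate for _ in range(n))
        if p_value(a, n, b, n) < alpha:
            hits += 1
    return hits / runs


print(aa_false_positive_rate())
```

If the pipeline is healthy, the share of "significant" A/A results should sit near `alpha`. A much higher share points to a problem in assignment, tracking, or the analysis itself.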

Testing is scientific.

5. Privacy and Data Shifts

With cookies disappearing and data regulations tightening, more companies run tests server-side, relying on first-party data. Privacy-preserving techniques are becoming standard.

6. Democratized Tools

Platforms now aim to let non-technical teams run safe experiments, while central teams ensure quality. This shift increases velocity but requires governance.

Common Mistakes (and How to Avoid Them)

Even experienced teams get this wrong. Here are mistakes I see most often:

- –> No hypothesis: Running a test “just to see what happens.”

- –> Stopping early: Ending tests after a few days, leading to false positives.

- –> Tiny sample sizes: Making decisions on unreliable data.

- –> Testing too many changes: Making attribution impossible.

- –> Focusing on vanity metrics: Prioritizing clicks over meaningful outcomes like revenue or retention.

- –> Ignoring segments: Applying results to all users when segments behave differently.

- –> Data integrity issues: Traffic misallocation or tracking errors.

- –> Siloed execution: Marketing, product, and design all testing separately without coordination.

- –> Forgetting follow-ups: Treating a winning variation as “final” instead of iterating further.

- –> Not documenting learnings: Failing to build a central knowledge base.

Each of these reduces credibility and wastes effort. Avoid them, and your testing program becomes a growth engine rather than a side project.
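Stopping early and undersized samples are easier to avoid when the required sample size is computed before launch. The sketch below uses the standard normal-approximation formula for comparing two proportions; the function name and the default 5% significance level and 80% power are assumptions, and dedicated calculators may use slightly different formulas.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline, mde, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant.

    baseline: current conversion rate (e.g. 0.05 for 5%)
    mde: minimum detectable effect as an absolute lift (e.g. 0.01)
    """
    p1, p2 = baseline, baseline + mde
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / mde ** 2)


# Detecting a lift from 5% to 6% takes on the order of 8,000 visitors per variant.
print(sample_size_per_variant(baseline=0.05, mde=0.01))
```

Halving the detectable effect roughly quadruples the required sample, which is why small expected lifts need long-running tests.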

Lessons From High-Velocity Testing Cultures

From working with clients across industries, here are the lessons distilled from teams that run testing at scale and speed:

Maintain a pipeline, not just a backlog

Always have 5–7 tests active across stages: design, live, analysis.

Run small pilots

Test on 5–10% of traffic first to catch issues before scaling.

Set guardrails

Define thresholds where a test should be stopped if it harms conversions or revenue too much.
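A guardrail can start as a simple rule evaluated on every dashboard refresh. The sketch below is deliberately naive and entirely hypothetical: it flags a test once the variant's conversion rate falls more than a chosen relative amount below control, and only after a minimum number of visitors per arm. A real guardrail would usually add a statistical test so that noise alone does not trigger a stop.

```python
def should_stop(control_conv, control_n, variant_conv, variant_n,
                max_relative_drop=0.10, min_visitors=1000):
    """Return True if the variant breaches the conversion guardrail."""
    # Too little data: any observed drop is likely noise.
    if min(control_n, variant_n) < min_visitors:
        return False
    control_rate = control_conv / control_n
    variant_rate = variant_conv / variant_n
    if control_rate == 0:
        return False  # nothing to fall below
    relative_drop = (control_rate - variant_rate) / control_rate
    return relative_drop > max_relative_drop


print(should_stop(100, 1000, 80, 1000))  # 20% relative drop
print(should_stop(100, 1000, 95, 1000))  # 5% relative drop
```

The thresholds here (`max_relative_drop`, `min_visitors`) are placeholders; each team should set its own based on how much downside it can tolerate.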

Automate deployment

The easier it is to launch, the more experiments you’ll run.

Iterate winners

Each winning test becomes the new baseline. Build on it.

Work cross-functionally

Involve design, engineering, product, and analytics together.

Monitor actively

Use dashboards to track sample ratios, performance by segment, and anomalies.
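Tracking sample ratios usually means checking for sample ratio mismatch (SRM): whether the observed traffic split matches the intended one. The sketch below uses a chi-square goodness-of-fit test with one degree of freedom for two variants; the function name is hypothetical, and the 0.001 alert threshold mentioned afterwards is a common convention rather than a fixed rule.

```python
from math import erfc, sqrt


def srm_p_value(n_a, n_b, expected_share_a=0.5):
    """P-value that the observed split matches the intended split."""
    total = n_a + n_b
    expected_a = total * expected_share_a
    expected_b = total - expected_a
    chi2 = ((n_a - expected_a) ** 2 / expected_a
            + (n_b - expected_b) ** 2 / expected_b)
    # Survival function of a chi-square distribution with 1 degree of freedom.
    return erfc(sqrt(chi2 / 2))


# Illustrative counts for a test that was meant to split 50/50.
print(srm_p_value(5000, 5400))
```

A very small p-value (for example, below 0.001) suggests the split itself is broken, in which case the test results should not be trusted until the cause is found.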

Learn from failures

A “losing” test disproves an assumption. That insight is just as valuable as a win.

Bundle where needed

Test interconnected flows (pricing + checkout + messaging) to avoid ripple surprises later.

Centralize knowledge

Maintain a registry of all experiments. Review quarterly.

The outcomes speak for themselves: companies adopting these practices typically see 3–5x more valid tests, higher win rates, and 10–20% uplift in core metrics (signups, purchases, retention) over a year.


What a Strong Testing Culture Looks Like

A testing culture doesn’t happen by accident. Here’s a model to aim for:

- 1. Awareness: Train teams on why testing matters.

- 2. Curiosity: Encourage anyone in the company to suggest test ideas.

- 3. Clear ownership: Define roles for design, build, analysis, and decision-making.

- 4. Alignment: Link test metrics directly to business KPIs.

- 5. Transparency: Share results openly, wins and losses alike.

- 6. Governance: A central team ensures quality, consistency, and prioritization.

- 7. Celebration: Reward insights gained, not just successful outcomes.

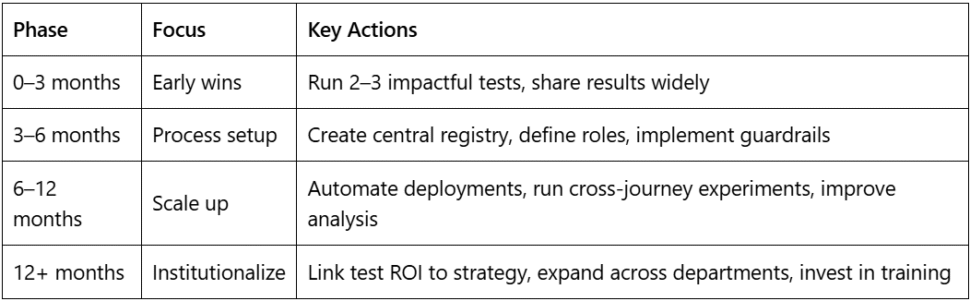

Sample Roadmap to Embed Testing

Final Thoughts

A/B testing in 2026 will be about disciplined learning, applied at scale, and tied to strategy. Leaders who invest in testing cultures don’t just get short-term lifts; they build engines of sustainable growth. If you’d like to see what this looks like in practice, explore our case studies.