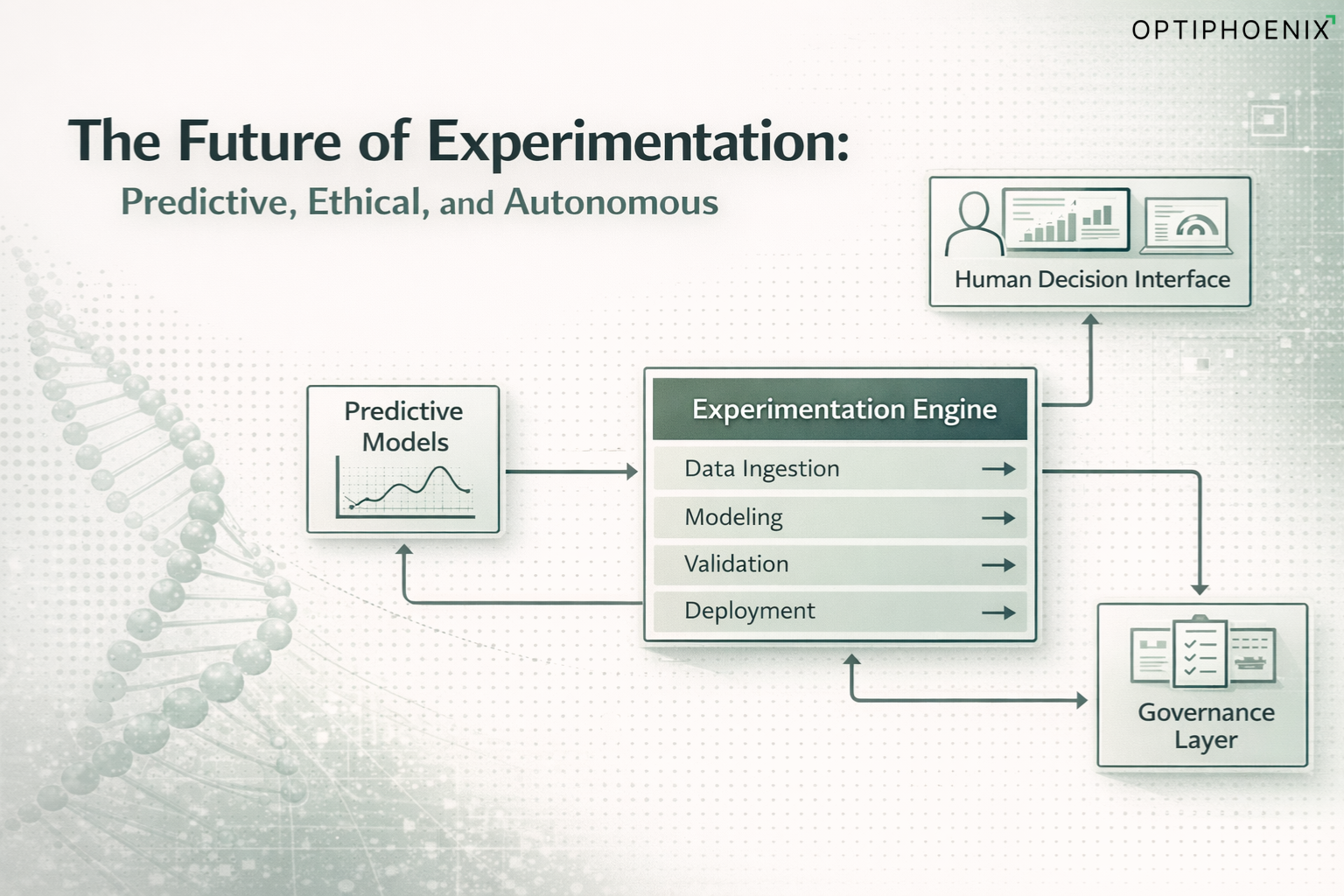

The Future of Experimentation: Predictive, Ethical, and Autonomous

Experimentation has moved through several eras.

The first era focused on execution. Teams shipped A/B tests, quantified outcomes, and celebrated lifts. It was a craft of operators. The second era introduced scale. Platforms made it easier to target, allocate, and measure. Companies ran more tests, yet most programs plateaued because they accumulated results rather than intelligence.

A new era is forming. This era values learning velocity over execution speed. It treats experimentation not as a set of tests but as a system that senses, predicts, governs, and learns. It integrates machine intelligence with human judgment to create compounding clarity.

Predictive modeling, autonomous experimentation, and ethical governance converge into a new architecture that moves the field forward. Experimentation becomes self-improving. It shifts from an activity to an intelligence layer.

This shift demands new skills, new governance, and new mental models. It also demands an architectural partner that understands how prediction, autonomy, and ethics must interact to create a trustworthy system.

Table of Contents

1. The Shift from Test Execution to Learning Architecture

2. Predictive Modeling Becomes the New Foundation

2.1 Hypothesis Scoring

2.2 Outcome Simulation

2.3 Dynamic Targeting

3. Autonomous Experimentation: Powerful When Governed

3.1 Bounded Autonomy

3.2 Safety Layers

3.3 Auditability and Explainability

4. Human-in-Loop Decisioning: Judgment Remains the Strategic Layer

4.1 Strategic Arbitration

4.2 Ethical Oversight

4.3 Narrative Building

5. Building the Modern Learning Architecture

5.1 Structured Insight Repositories

5.2 Evidence-Based Prioritization Frameworks

5.3 Behavioral Signal Graphs

5.4 Cross-Functional Learning Loops

5.5 System Memory with Model Feedback

5.6 Governance Dashboard and Ethical Supervision

6. The Strategic Advantage of Learning Velocity

7. OptiPhoenix: Architecting Intelligent and Ethical Experimentation Systems

Frequently Asked Questions (FAQ)

1. How does predictive modeling change the way experimentation programs operate?

2. What does “learning velocity” mean in modern CRO, and why is it more important than test volume?

3. How does autonomous experimentation work without compromising revenue, privacy, or brand integrity?

4. Why is human-in-loop decisioning still necessary when models can predict and execute tests automatically?

5. How do experimentation systems evolve into “learning architectures”?

People Also Ask

1. What is the future of CRO with AI?

2. How will predictive models improve experimentation accuracy?

3. Why is governance essential in autonomous testing?

4. What role do humans play in AI-driven experimentation?

5. How do organizations increase learning velocity in experimentation?

1. The Shift from Test Execution to Learning Architecture

Traditional experimentation treats tests as isolated events. Teams connect ideas to outcomes, celebrate a few winners, and move on. This produces pockets of improvement but not cumulative intelligence.

Learning architecture works differently.

It absorbs every experiment, every segment interaction, every behavioral pattern, and every failure. It converts this flow of evidence into a system that:

- Predicts where friction will appear

- Identifies which hypotheses deserve attention

- Reuses past insights to shape future experiments

- Aligns testing with long-range strategy

- Elevates the organization’s ability to interpret behavior

The key transition is conceptual. Execution focuses on outputs. Learning architecture focuses on understanding.

Learning velocity becomes the decisive metric.

A fast learner compounds advantage faster than a fast executor.

Companies that master this architecture build an intelligence flywheel. Their decisions improve because their understanding sharpens. Their experiments perform better because their hypotheses mature. Their teams align faster because evidence becomes structured.

Execution remains important, but it no longer defines the program. The program's strength now lies in memory, governance, and predictive capability.

2. Predictive Modeling Becomes the New Foundation

As digital ecosystems expand, behavior fragments across touchpoints, and experimentation workloads grow. Manual hypothesis selection cannot keep pace. Predictive modeling steps in to reorganize how teams prioritize, simulate, and target.

Three predictive capabilities anchor the future.

2.1 Hypothesis Scoring

A typical organization generates hundreds of ideas each year. Most are low-impact. Some are redundant. A few have high potential but remain invisible under the operational load.

Prediction models solve this problem by analyzing:

- Historical test wins and losses

- Behavioral clusters across sessions

- UX heuristics and friction signals

- Product seasonality and demand curves

- Copy, layout, and functional attributes of past winners

The model ranks hypotheses by expected lift probability and expected business impact.

This reframes the experiment backlog. Teams allocate energy where the likelihood of learning and impact is highest. Prediction becomes a strategic filter.

It does not replace the human ability to propose ideas. It enhances the discernment needed to decide which ideas matter.
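As a minimal sketch of the ranking step, assume an upstream model already supplies each hypothesis's probability of a positive lift (`p_win`) and an estimate of its business impact if it wins (`impact_if_win`). Expected value is then simply their product. The class, field names, and backlog numbers below are hypothetical and purely illustrative.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    name: str
    p_win: float          # hypothetical model output: probability the variant beats control
    impact_if_win: float  # hypothetical estimate of business impact (e.g. annual revenue) if it wins

    @property
    def expected_value(self) -> float:
        # Expected impact = probability of a lift x impact when the lift occurs
        return self.p_win * self.impact_if_win


def rank_backlog(hypotheses: list[Hypothesis]) -> list[Hypothesis]:
    """Order the backlog so the highest expected-value ideas come first."""
    return sorted(hypotheses, key=lambda h: h.expected_value, reverse=True)


# Illustrative backlog; the numbers are invented for the example.
backlog = [
    Hypothesis("shorter checkout form", p_win=0.30, impact_if_win=200_000),
    Hypothesis("new hero copy", p_win=0.55, impact_if_win=40_000),
    Hypothesis("trust badges on product page", p_win=0.40, impact_if_win=90_000),
]

for h in rank_backlog(backlog):
    print(f"{h.name}: expected value {h.expected_value:,.0f}")
```

Note that the highest-probability idea (new hero copy) ranks last here: a likely win with a small payoff can be worth less than a riskier idea with a large one.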

2.2 Outcome Simulation

Before an experiment launches, the model simulates directional outcomes. These simulations project:

- Which audience groups might respond differently

- How downstream revenue or retention could shift

- Whether the idea involves risk-heavy trade-offs

- Whether sample size requirements justify deployment

Simulations do not guarantee accuracy. They provide foresight.

That foresight reduces waste. It prevents engineering cycles from being spent on ideas with low learning value.

Outcome simulation turns experimentation into a more capital-efficient practice.
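The sample-size bullet is the easiest part of simulation to make concrete. A common feasibility check, shown here as a sketch rather than as a full simulation engine, computes the visitors needed per arm for a two-sided two-proportion z-test using the normal approximation, then compares the implied test duration against a cap. The function names, the defaults of α = 0.05 and 80% power, and the 28-day cap are assumptions.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(baseline: float, relative_mde: float,
                        alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors per arm for a two-sided two-proportion z-test (normal approximation)."""
    p1 = baseline
    p2 = baseline * (1 + relative_mde)  # conversion rate if the minimum lift is real
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def worth_launching(baseline: float, relative_mde: float, daily_visitors: int,
                    arms: int = 2, max_days: int = 28) -> tuple[bool, int, int]:
    """Return (feasible, visitors per arm, days needed) under a hypothetical duration cap."""
    n = sample_size_per_arm(baseline, relative_mde)
    days_needed = math.ceil(n * arms / daily_visitors)
    return days_needed <= max_days, n, days_needed


# Example: 3% baseline conversion, aiming to detect a 10% relative lift.
feasible, n, days = worth_launching(0.03, 0.10, daily_visitors=5_000)
print(feasible, n, days)
```

The required sample grows with the inverse square of the detectable difference, so halving the minimum detectable effect roughly quadruples the traffic needed. That is often why low-traffic ideas fail this check.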

2.3 Dynamic Targeting

User behavior diverges sharply during acquisition, product exploration, and purchase. Segments behave with different intent, different sensitivities, and different time horizons.

Dynamic targeting predicts which variation suits which cohort. The system routes experiences with governed flexibility. These predictions rely on:

- Real-time behavioral scoring

- Intent estimation

- Segment effect sizes from past tests

- Safety constraints on traffic

This enables micro-level experimentation without losing control at the macro level.

Dynamic targeting increases precision without sacrificing stability.
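One way to implement dynamic targeting is per-segment Thompson sampling, a standard bandit technique. The article does not name a specific algorithm, so treat this as a swapped-in example. Each (segment, variant) pair keeps a Beta posterior over its conversion rate, each variant's traffic share follows the estimated probability that it is best, and a `min_share` floor stands in for the "safety constraints on traffic". `SegmentRouter` and its parameters are hypothetical.

```python
import random
from collections import defaultdict


class SegmentRouter:
    """Hypothetical per-segment Thompson sampling router with a traffic floor per variant."""

    def __init__(self, variants, min_share=0.10, seed=None):
        if min_share * len(variants) > 1:
            raise ValueError("min_share is too large for this many variants")
        self.variants = list(variants)
        self.min_share = min_share
        self.rng = random.Random(seed)
        # Beta(1, 1) prior per (segment, variant), stored as [alpha, beta]
        self.params = defaultdict(lambda: [1, 1])

    def record(self, segment, variant, converted):
        """Update the posterior after observing one visitor's outcome."""
        ab = self.params[(segment, variant)]
        if converted:
            ab[0] += 1
        else:
            ab[1] += 1

    def allocation(self, segment, draws=2_000):
        """Traffic share per variant: estimated P(variant is best), floored at min_share."""
        wins = dict.fromkeys(self.variants, 0)
        for _ in range(draws):
            samples = {v: self.rng.betavariate(*self.params[(segment, v)])
                       for v in self.variants}
            wins[max(samples, key=samples.get)] += 1
        free = 1 - self.min_share * len(self.variants)
        return {v: self.min_share + free * wins[v] / draws for v in self.variants}

    def route(self, segment):
        """Pick a variant for the next visitor in this segment."""
        alloc = self.allocation(segment)
        return self.rng.choices(list(alloc), weights=list(alloc.values()))[0]


# Illustrative data: on mobile, variant_b converts 24/200 vs control 10/200.
router = SegmentRouter(["control", "variant_b"], min_share=0.10, seed=7)
for variant, conversions in (("control", 10), ("variant_b", 24)):
    for i in range(200):
        router.record("mobile", variant, converted=i < conversions)

print(router.allocation("mobile"))
```

The floor trades some short-term conversions for continued measurement: every arm keeps enough traffic for its estimate to update if behavior shifts. In practice the allocation would likely be cached and refreshed periodically rather than recomputed for every visitor.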

3. Autonomous Experimentation: Powerful When Governed

Automation in experimentation is inevitable. Traffic allocation, variant rollout, anomaly detection, and routine test deployment are mechanical processes. Machine-driven execution will accelerate timelines and reduce operational burden.

Yet autonomy without governance is hazardous. Experimentation touches revenue, user trust, privacy, accessibility, and brand voice. Any system with autonomous powers must operate within defined boundaries.

The future of autonomous experimentation relies on three governance constructs.

3.1 Bounded Autonomy

Bounded autonomy defines where the system can act independently. It includes:

- Low-risk UI adjustments

- Micro-copy variations

- Minor targeting optimizations

- Traffic allocation shifts within safe ranges

- Sequential testing on non-critical flows

High-risk zones such as checkout, pricing, authentication, and high-value dashboards require human review and sign-off.

The system gains speed. Humans retain strategic control.
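Bounded autonomy can be expressed as an explicit policy check that runs before any automated action. The sketch below assumes a hypothetical allowlist of action types, a set of high-risk zones taken from the list above, and a ten-point cap on traffic shifts. Anything outside those bounds escalates to a human.

```python
from dataclasses import dataclass

# Hypothetical policy values; each organization would define its own.
HIGH_RISK_ZONES = {"checkout", "pricing", "authentication", "high_value_dashboard"}
AUTONOMOUS_ACTIONS = {"ui_adjustment", "microcopy", "targeting_tweak",
                      "traffic_shift", "sequential_test"}
MAX_TRAFFIC_SHIFT = 0.10  # largest absolute change in traffic share allowed without review


@dataclass
class ProposedAction:
    kind: str
    zone: str
    traffic_shift: float = 0.0  # change in traffic share, e.g. 0.05 = five points


def decide(action: ProposedAction) -> str:
    """Return 'auto' if the system may act alone, otherwise 'needs_review'."""
    if action.zone in HIGH_RISK_ZONES:
        return "needs_review"  # high-risk zones always require human sign-off
    if action.kind not in AUTONOMOUS_ACTIONS:
        return "needs_review"  # default deny: unknown action types escalate
    if abs(action.traffic_shift) > MAX_TRAFFIC_SHIFT:
        return "needs_review"  # shift outside the safe range
    return "auto"


print(decide(ProposedAction("microcopy", "blog")))                              # → auto
print(decide(ProposedAction("microcopy", "checkout")))                          # → needs_review
print(decide(ProposedAction("traffic_shift", "search", traffic_shift=0.25)))    # → needs_review
```

The check defaults to escalation. An action type that is not explicitly allowlisted goes to review, so new capabilities cannot slip into autonomous mode unnoticed.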

3.2 Safety Layers

Safety layers ensure experimentation never compromises user experience or business integrity. They include:

- Revenue floors to prevent downside risk

- Accessibility validations to protect inclusivity

- Privacy filtering on sensitive attributes

- Dataset lineage checks

- Model confidence thresholds

- Automatic rollback if anomalies appear

Safety transforms autonomy from operational automation to trusted infrastructure.
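Several of these safety layers reduce to threshold checks on live variant metrics. This sketch, with hypothetical field names and thresholds, covers the revenue floor, accessibility, privacy, model-confidence, and anomaly checks, using error rate as a stand-in anomaly signal. Dataset lineage is left out because it depends on how a given data platform records provenance. A non-empty violation list is what would trigger the automatic rollback.

```python
from dataclasses import dataclass


@dataclass
class VariantHealth:
    revenue_per_visitor: float
    control_revenue_per_visitor: float
    model_confidence: float              # 0-1 confidence reported by the deciding model
    error_rate: float                    # stand-in anomaly signal, e.g. share of failed sessions
    accessibility_passed: bool = True    # result of an automated accessibility scan
    uses_sensitive_attributes: bool = False


@dataclass(frozen=True)
class Guardrails:
    """Hypothetical thresholds; real values depend on the business."""
    revenue_floor_ratio: float = 0.97    # keep at least 97% of control revenue per visitor
    min_model_confidence: float = 0.80
    max_error_rate: float = 0.02


def check(h: VariantHealth, g: Guardrails = Guardrails()) -> list[str]:
    """Return the names of every guardrail the variant currently violates."""
    violations = []
    if h.revenue_per_visitor < g.revenue_floor_ratio * h.control_revenue_per_visitor:
        violations.append("revenue_floor")
    if not h.accessibility_passed:
        violations.append("accessibility")
    if h.uses_sensitive_attributes:
        violations.append("privacy")
    if h.model_confidence < g.min_model_confidence:
        violations.append("model_confidence")
    if h.error_rate > g.max_error_rate:
        violations.append("anomaly")
    return violations


def should_roll_back(h: VariantHealth, g: Guardrails = Guardrails()) -> bool:
    """In this sketch, any violation triggers a rollback to control."""
    return bool(check(h, g))


healthy = VariantHealth(2.00, 2.02, model_confidence=0.90, error_rate=0.005)
losing = VariantHealth(1.80, 2.02, model_confidence=0.90, error_rate=0.005)
print(check(healthy), check(losing))  # → [] ['revenue_floor']
```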

3.3 Auditability and Explainability

Every autonomous action must be transparent. Each test, algorithmic decision, and traffic shift is logged with:

- Reasoning metadata

- Model version

- Dataset inputs

- Guardrail status

- Comparative outcomes

This audit trail makes the system easier to debug, compliance-ready, and accountable.

Auditability reinforces trust. Without it, autonomous experimentation is unacceptable at scale.
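An audit trail is easiest to query when each autonomous action is written as one structured record. The sketch below appends records as JSON Lines (one JSON object per line), with fields mirroring the list above. The field names, the temporary file location, and the example values are hypothetical.

```python
import json
import os
import tempfile
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    action: str                       # e.g. "traffic_shift" or "variant_rollout"
    experiment_id: str
    reasoning: str                    # reasoning metadata: why the system acted
    model_version: str
    dataset_inputs: tuple[str, ...]   # identifiers of the data the decision used
    guardrail_status: str             # e.g. "pass" or "fail:revenue_floor"
    comparative_outcomes: dict        # metric per arm at decision time
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())


def append_record(path: str, record: AuditRecord) -> None:
    """Append one record as a JSON line; existing lines are never rewritten."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(record), sort_keys=True) + "\n")


def read_records(path: str) -> list[dict]:
    with open(path, encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


# Illustrative usage with hypothetical identifiers, written to a temporary file.
log_path = os.path.join(tempfile.mkdtemp(), "audit.jsonl")
append_record(log_path, AuditRecord(
    action="traffic_shift",
    experiment_id="exp-042",
    reasoning="variant_b estimated P(best) above policy threshold",
    model_version="targeting-v3",
    dataset_inputs=("sessions_week_40",),
    guardrail_status="pass",
    comparative_outcomes={"control": 0.031, "variant_b": 0.034},
))
records = read_records(log_path)
print(records[0]["model_version"], records[0]["guardrail_status"])
```

Appending rather than rewriting matters here: a log whose entries can be edited after the fact is much weaker evidence in a compliance review. A plain file is not tamper-proof, so production systems would typically use write-once storage.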

4. Human-in-Loop Decisioning: Judgment Remains the Strategic Layer

As prediction and autonomy mature, the human role becomes more selective yet more important.

Humans supply strategic judgment, ethical control, and narrative interpretation. Machines optimize for signals. Humans optimize for purpose.

Three human responsibilities shape high-quality experimentation systems.

4.1 Strategic Arbitration

Prediction helps prioritize. Simulation helps forecast. Autonomy helps execute. Yet the final decision on which problems deserve attention belongs to leadership.

Humans decide:

- Which experiments align with long-range vision

- Which segments deserve protection

- Which ideas influence brand trajectory

- Which insights feed product roadmaps

The system proposes. Humans arbitrate.

4.2 Ethical Oversight

Autonomous experimentation touches boundaries of fairness and trust. Human oversight defines:

- Prohibited test categories

- Sensitive attribute restrictions

- Maximum risk tolerance

- Acceptable trade-offs in attention, friction, or personalization

Ethical boundaries cannot be delegated to models. They require principled human reasoning.

4.3 Narrative Building

Experimentation outputs numbers. Decision-making demands narrative. Humans translate insights into strategy by interpreting:

- Patterns across experiments

- Behavioral shifts over time

- Context behind anomalies

- Implications for product, marketing, and CX

A learning architecture only becomes useful when someone converts data into meaning.

Humans remain essential because interpretation sits at the core of learning velocity.

5. Building the Modern Learning Architecture

A modern experimentation system does not end with test cycles. It builds institutional memory. It forms structural clarity that compounds over time.

A fully mature learning architecture includes six components.

5.1 Structured Insight Repositories

Instead of static reports, insights are captured as structured objects. Each object includes:

- Hypothesis

- Variation logic

- Traffic behavior

- Segment learnings

- Effect sizes

- Qualitative friction notes

- Model simulations

- Final interpretation

This transforms learning into reusable intelligence.
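Here is a minimal sketch of an insight object using Python dataclasses, with one example query. The field names map loosely to the list above and are assumptions, as are the example values. A production repository would more likely live in a database than in memory.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Insight:
    """Hypothetical structured insight object; fields follow the list above loosely."""
    hypothesis: str
    variation_logic: str
    visitors_per_arm: int                    # traffic behavior, simplified to one number
    effect_size: float                       # observed relative lift, e.g. 0.04 = +4%
    segment_effects: dict[str, float] = field(default_factory=dict)
    friction_notes: list[str] = field(default_factory=list)
    simulated_effect: Optional[float] = None  # what the model predicted before launch
    interpretation: str = ""

    def simulation_error(self) -> Optional[float]:
        """Observed minus predicted lift, useful for checking model calibration."""
        if self.simulated_effect is None:
            return None
        return self.effect_size - self.simulated_effect


def wins_for_segment(repo: list[Insight], segment: str,
                     min_effect: float = 0.0) -> list[Insight]:
    """Past insights whose recorded effect for this segment exceeds min_effect."""
    return [i for i in repo if i.segment_effects.get(segment, float("-inf")) > min_effect]


# Illustrative repository; the contents are invented for the example.
repo = [
    Insight("Sticky add-to-cart bar reduces scroll friction", "show bar after 50% scroll",
            visitors_per_arm=40_000, effect_size=0.03,
            segment_effects={"mobile": 0.06, "desktop": 0.00},
            friction_notes=["users lost the CTA on long pages"], simulated_effect=0.02),
    Insight("Shorter product titles improve scanning", "truncate titles to 60 chars",
            visitors_per_arm=25_000, effect_size=-0.01,
            segment_effects={"mobile": -0.02, "desktop": 0.01}),
]
print([i.hypothesis for i in wins_for_segment(repo, "mobile")])
```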

5.2 Evidence-Based Prioritization Frameworks

With structured repositories, prioritization improves. Decisions reflect:

- Historical evidence

- Predictive scores

- Cross-experiment relationships

- Organizational goals

- Seasonal patterns

Prioritization becomes algorithmically informed and strategically guided.
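Prioritization of this kind is often a weighted score. The sketch below combines historical evidence, a predictive score, and goal alignment (each normalized to 0 to 1) and applies a seasonal multiplier. To represent cross-experiment relationships, it treats a running test on the same page as a blocker. The weights, fields, and blocking rule are hypothetical choices, not a prescribed formula.

```python
from dataclasses import dataclass

# Hypothetical weights; a team would calibrate its own.
WEIGHTS = {"historical_win_rate": 0.3, "predicted_score": 0.4, "goal_alignment": 0.3}


@dataclass
class Candidate:
    name: str
    page: str
    historical_win_rate: float    # share of similar past tests that won (0-1)
    predicted_score: float        # model score, normalized to 0-1
    goal_alignment: float         # 0-1 rating against current organizational goals
    seasonal_factor: float = 1.0  # above 1.0 when the idea suits the current season


def priority(c: Candidate, pages_with_running_tests: set[str]) -> float:
    """Weighted evidence score, scaled by seasonality; 0 if the page is already under test."""
    if c.page in pages_with_running_tests:
        return 0.0  # avoid overlapping experiments that could interfere with each other
    base = sum(weight * getattr(c, name) for name, weight in WEIGHTS.items())
    return base * c.seasonal_factor


# Illustrative candidates with invented scores.
candidates = [
    Candidate("bundle offer", "product", 0.5, 0.6, 0.8),
    Candidate("holiday banner", "home", 0.2, 0.3, 0.4, seasonal_factor=1.5),
    Candidate("cart upsell", "cart", 0.6, 0.7, 0.9),
]
running = {"cart"}
ranked = sorted(candidates, key=lambda c: priority(c, running), reverse=True)
print([(c.name, round(priority(c, running), 2)) for c in ranked])
```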

5.3 Behavioral Signal Graphs

As experiments increase, behavioral signals interconnect. A signal graph shows:

- Which UX changes influence which metrics

- Where friction concentrates

- How upstream actions impact downstream revenue

- How new users differ from returning users

- Which device classes produce variance

Signal graphs create a living model of user behavior.
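At its simplest, a signal graph is a directed graph whose edges record observed effects from one node (a UX change or upstream action) to another (a metric). The sketch below, with hypothetical node names and effect values, answers one question from the list: which metrics sit downstream of a given change. It uses a breadth-first search over the edges.

```python
from collections import defaultdict, deque


class SignalGraph:
    """Hypothetical signal graph: edges point from a change or action to what it moved."""

    def __init__(self):
        self.edges = defaultdict(dict)  # source -> {target: observed relative effect}

    def add_effect(self, source: str, target: str, effect: float) -> None:
        self.edges[source][target] = effect

    def downstream(self, start: str) -> set[str]:
        """Every node reachable from start, found by breadth-first search."""
        seen, queue = set(), deque([start])
        while queue:
            node = queue.popleft()
            for nxt in self.edges.get(node, {}):
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
        return seen


# Illustrative edges with invented effect sizes.
g = SignalGraph()
g.add_effect("filter_redesign", "product_views", 0.08)
g.add_effect("product_views", "add_to_cart", 0.03)
g.add_effect("add_to_cart", "revenue", 0.02)
g.add_effect("hero_copy", "bounce_rate", -0.04)
print(sorted(g.downstream("filter_redesign")))  # → ['add_to_cart', 'product_views', 'revenue']
```

Reachability only says a change can influence a metric. It does not estimate how large the downstream effect is; that still requires measurement.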

5.4 Cross-Functional Learning Loops

The system must teach. Product, engineering, marketing, and leadership require clarity at their level.

Learning loops ensure insights reach the right teams in formats that encourage action:

- Decision briefs for leadership

- UX patterns for design

- Segment behavior nodes for marketing

- Model drift reports for engineering

Learning becomes a shared, cross-functional asset.

5.5 System Memory with Model Feedback

Experiments refine models. Models shape experiments. This feedback loop generates compounding accuracy.

Each experiment updates:

- Hypothesis scoring models

- Targeting algorithms

- Simulation engines

- Behavioral clusters

Every cycle improves predictive capability.
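One simple form of this feedback loop is a Beta-Binomial update. Each hypothesis category keeps a count of wins and losses, and every finished experiment updates the category's estimated win probability, the kind of input hypothesis scoring relies on. The class names and the uniform Beta(1, 1) starting prior are assumptions, and treating outcomes as simple win/loss ignores effect size.

```python
from dataclasses import dataclass


@dataclass
class CategoryPrior:
    wins: float = 1.0    # Beta alpha; starts from a uniform Beta(1, 1) prior
    losses: float = 1.0  # Beta beta

    @property
    def win_probability(self) -> float:
        # Posterior mean of the Beta distribution
        return self.wins / (self.wins + self.losses)


class ScoringMemory:
    """Hypothetical store of per-category win rates that scoring models read from."""

    def __init__(self):
        self.priors: dict[str, CategoryPrior] = {}

    def update(self, category: str, won: bool) -> None:
        prior = self.priors.setdefault(category, CategoryPrior())
        if won:
            prior.wins += 1
        else:
            prior.losses += 1

    def p_win(self, category: str) -> float:
        return self.priors.get(category, CategoryPrior()).win_probability


memory = ScoringMemory()
for outcome in [True, False, False, True, True]:  # five finished checkout-copy tests
    memory.update("checkout_copy", outcome)
print(round(memory.p_win("checkout_copy"), 3), memory.p_win("unseen_category"))  # → 0.571 0.5
```

Starting from Beta(1, 1) means a category with no history scores 0.5. Each new result then moves the estimate a little less as evidence accumulates.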

5.6 Governance Dashboard and Ethical Supervision

To make autonomy safe and predictable, organizations need control centers that surface:

- Model confidence

- Privacy risks

- Traffic movements

- Anomalies

- Violations of ethical rules

- Transparency reports

Governance is the stabilizing force that allows automation to scale responsibly.

6. The Strategic Advantage of Learning Velocity

Learning velocity determines competitive advantage in complex markets. It measures how quickly an organization can:

- Sense behavioral changes

- Interpret signals

- Test hypotheses

- Absorb insights

- Scale improvements

- Strengthen models

A company with high learning velocity reacts faster, adapts faster, and compounds clarity faster.

Speed is temporary. Learning velocity is durable.

When predictive modeling, autonomy, and governance merge into a coherent architecture, experimentation becomes a continuously improving intelligence layer. This architecture creates advantages that competitors find difficult to replicate.

The organizations that succeed in 2026 and beyond will not be the ones that test the most. They will be the ones that learn the fastest.

7. OptiPhoenix: Architecting Intelligent and Ethical Experimentation Systems

OptiPhoenix stands at the center of this evolution.

Our role is to architect systems that combine prediction, governance, autonomy, and strategic judgment into a unified learning architecture.

Our approach emphasizes:

- Learning velocity as the principal metric

- Ethical constraints as foundational, not optional

- Predictive clarity for every hypothesis

- Autonomous execution with strict guardrails

- Memory systems that retain intelligence for years

- Human-in-loop decisioning for context and direction

- Narrative clarity that aligns the organization

Experimentation becomes a cognitive system.

The system senses, interprets, remembers, and improves.

OptiPhoenix builds that system with precision and long-term discipline.

Organizations that adopt this architecture unlock clarity that compounds.

They gain the ability to understand users with depth, adapt with speed, and scale insights with accuracy.

This is the future of experimentation. Predictive. Ethical. Autonomous.

A future shaped not by test volume but by learning velocity.

Frequently Asked Questions (FAQ)

1. How does predictive modeling change the way experimentation programs operate?

Predictive modeling elevates experimentation from reactive testing to proactive decision systems. Instead of relying on intuition or scattered heuristics, models analyze historical experiments, behavioral clusters, UX attributes, and segment variance to score hypotheses and estimate potential outcomes. This reduces the number of low-value tests, increases learning density, and aligns experimentation efforts with long-range strategy. Predictive modeling becomes the foundation for prioritization, simulation, and audience targeting across markets and geographies.

2. What does “learning velocity” mean in modern CRO, and why is it more important than test volume?

Learning velocity measures the rate at which an organization converts user behavior into structured insight and institutional knowledge. It reflects how quickly teams sense behavioral change, test meaningful hypotheses, interpret outcomes, and update decision models. Test volume can inflate activity without increasing understanding. Learning velocity compounds the advantage. Organizations with high learning velocity outperform because they identify friction earlier, adapt product direction faster, and retain intelligence even as teams evolve.

3. How does autonomous experimentation work without compromising revenue, privacy, or brand integrity?

Autonomous experimentation functions within a governance structure that defines clear boundaries. Systems receive autonomy only in low-risk flows and operate under revenue floors, privacy filters, accessibility checks, and anomaly detection layers. Every autonomous action—traffic allocation, variation rollout, targeting refinement—is logged with reasoning metadata and version control. This ensures transparency, accountability, and ethical compliance. Autonomy accelerates execution, while governance preserves user trust and business stability.

4. Why is human-in-loop decisioning still necessary when models can predict and execute tests automatically?

Models excel at pattern recognition and operational speed, but they lack contextual judgment. Humans remain responsible for strategic arbitration, ethical constraints, brand protection, and narrative interpretation. Human-in-loop decisioning ensures that experiments reinforce long-term direction rather than short-term lifts, respect ethical trade-offs, and reflect product vision across global markets. AI optimizes for signals. Humans optimize for meaning. This relationship produces responsible autonomy.

5. How do experimentation systems evolve into “learning architectures”?

A learning architecture integrates predictive models, autonomous execution, structured repositories, behavioral signal graphs, and governance dashboards. It captures insights as reusable objects, simulates outcomes before deployment, updates models after each experiment, and surfaces learnings across functions. The system senses, interprets, remembers, and adapts. This evolution transforms experimentation from a tactical activity into a strategic intelligence layer that compounds clarity year after year.

People Also Ask

1. What is the future of CRO with AI?

CRO evolves into predictive and autonomous systems that prioritize ideas, simulate impact, and govern tests ethically. The focus shifts from launching tests to accelerating organizational learning.

2. How will predictive models improve experimentation accuracy?

Predictive models evaluate historical results, behavioral data, and UX patterns to forecast lift probability and segment responsiveness, reducing wasted tests and improving experiment quality.

3. Why is governance essential in autonomous testing?

Governance creates safety boundaries. It enforces revenue protection, ethical constraints, privacy compliance, and auditability so autonomous experiments operate within controlled risk.

4. What role do humans play in AI-driven experimentation?

Humans guide strategy, ethics, brand choices, and narrative interpretation. AI handles execution. Human oversight ensures experiments align with long-term objectives.

5. How do organizations increase learning velocity in experimentation?

Teams raise learning velocity by using predictive scoring, structured insight systems, simulation models, and feedback loops that refine hypotheses and speed up decision-making.