How AI Is Going to Redefine CRO in 2026

Over the last two years, AI has quietly moved from experimentation support to experimentation infrastructure.

It now influences how hypotheses are formed, how tests are prioritized, and how insights are reused.

The result is a new kind of CRO program, one that doesn’t just optimize user flows but systematizes learning.

This shift isn’t about adding another layer of automation.

It’s about reengineering how growth teams think, test, and decide, across design, engineering, and marketing.

Table of Contents

The Strategic Case: What the Numbers Say

From “Ran a Test” to “Run a System”

- Predictive Experimentation (anticipate, don’t react)

- Micro-Adaptation (from segments to moments)

- AI-Assisted UX (design evidence over design opinion)

- Autonomous Experimentation (velocity → value)

- Ethical Personalization (trust is a conversion metric)

Four Failure Modes (and How to Avoid Them)

The Executive Playbook: AI x CRO as Operating System

- Pillar 1: Governance & Safety Nets

- Pillar 2: North-Star Metrics (beyond CTR)

- Pillar 3: Predictive UX & Micro-Adaptation

- Pillar 4: Speed Budget & Performance Guardrails

- Pillar 5: Autonomous Experimentation with Hypothesis Control

- Pillar 6: Insight Library & Reuse

What “Maturity” Looks Like in 2026

Field Notes: Where We See the Fastest Wins

Closing Perspective: What the Best Teams Do Differently

FAQs

- What is predictive experimentation in CRO?

- How do autonomous testing platforms affect governance & risk?

- What metrics should CEOs/CMOs track beyond CTR?

- How does ethical personalization improve conversions and margins?

- How is AI reshaping experiment design and developer workflows?

Executive Hook

Problem. Most brands have adopted “AI” in pockets: copy tools, basic personalization, ad automation. Yet cart abandonment still hovers around 70%, checkout friction persists, and experimentation produces more noise than knowledge. (Baymard Institute)

Agitation. The gap isn’t the absence of tests—it’s the absence of learning systems. Teams chase test velocity, ship more variants, and drown in inconclusive dashboards. Meanwhile, buyers in 2026 reward brands that are transparent, trustworthy, and context-aware, not merely faster.

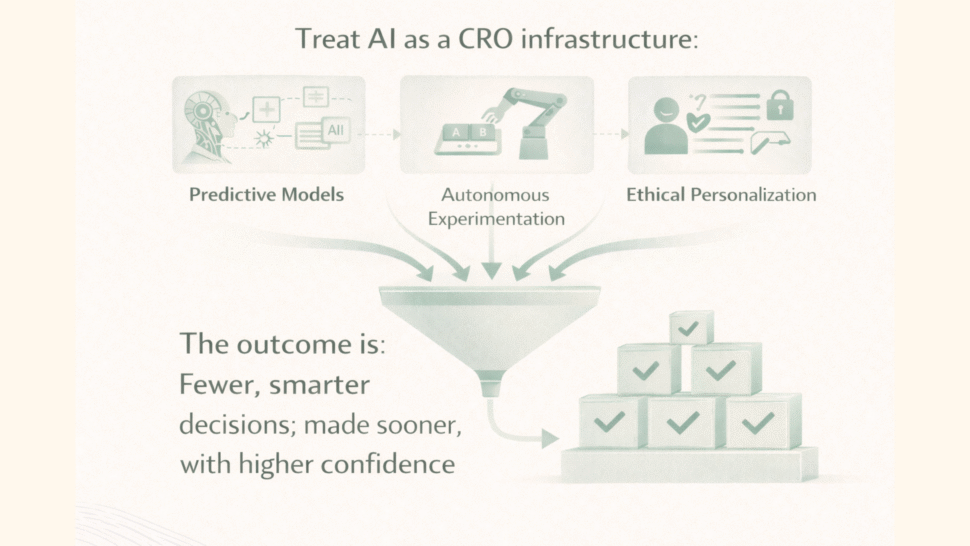

Solution. Treat AI as CRO infrastructure: predictive models that anticipate friction, autonomous experimentation that learns continuously, and ethical personalization that earns consent. The outcome is fewer, smarter decisions, made sooner and with higher confidence.

The Strategic Case: What the Numbers Say

- Personalization impact (done right). McKinsey’s multi-industry research shows personalization typically drives 5–15% revenue lift (and up to 10–30% marketing ROI gains) when executed with maturity. (McKinsey & Company)

- Friction is expensive. The average cart abandonment rate of roughly 70% remains a global norm: both a warning and a roadmap for value creation. (Baymard Institute)

- Speed matters, but only to a point. On mobile, a 0.1s (100ms) speed improvement lifted conversions by 8–10% in Google’s data, and case syntheses report similar sensitivity at that 100ms scale. Speed is table stakes; interpretation creates edge. (Think with Google)

- 2026 reality check. Analysts forecast heavy scrutiny on AI ROI and governance. Forrester and Gartner both signal a pivot from hype to hard outcomes and controlled agentic deployments; many “agent” projects will be trimmed for lack of value. (Forrester)

Implication for leaders: The advantage in 2026 is not adoption; it’s operationalization, turning AI into a governed, measurable, and human-centered conversion system.

From “Ran a Test” to “Run a System”

Traditional CRO asks, “Which version won?” AI-enabled CRO asks, “What will work next—and why?”

1) Predictive Experimentation (anticipate, don’t react)

Modern models observe micro-interactions (hover, scroll cadence, hesitation near CTAs) and predict drop-offs before they happen, allowing smart re-routing of journeys in real time. We see this direction in leading stacks where checkout flows adapt pre-emptively based on risk signals. It shifts the team’s time from post-hoc analysis to upstream prevention. (Think: reinforcement learning tuned for conversion goals.)

Executive value: fewer wasted sessions, faster clarity on where to intervene.
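As a minimal sketch of the idea, assuming a team already captures per-session micro-interaction signals, the snippet below turns those signals into a drop-off risk score with a logistic function. The feature names, weights, bias, and the 0.6 threshold are all hypothetical placeholders; a production model would learn its parameters from historical sessions and would likely be more sophisticated than this.

```python
import math
from dataclasses import dataclass


@dataclass
class SessionSignals:
    # Hypothetical micro-interaction features for one session.
    cta_hover_seconds: float     # time hovering near the CTA without clicking
    scroll_reversals: int        # up/down direction changes while scrolling
    seconds_idle_at_step: float  # idle time on the current checkout step


# Illustrative weights only; a real model would be fitted to historical data.
WEIGHTS = {
    "cta_hover_seconds": 0.15,
    "scroll_reversals": 0.10,
    "seconds_idle_at_step": 0.05,
}
BIAS = -3.0


def drop_off_risk(s: SessionSignals) -> float:
    """Return a probability-like risk score in (0, 1) via a logistic function."""
    z = (
        BIAS
        + WEIGHTS["cta_hover_seconds"] * s.cta_hover_seconds
        + WEIGHTS["scroll_reversals"] * s.scroll_reversals
        + WEIGHTS["seconds_idle_at_step"] * s.seconds_idle_at_step
    )
    return 1.0 / (1.0 + math.exp(-z))


def should_intervene(s: SessionSignals, threshold: float = 0.6) -> bool:
    """Flag the session for a pre-emptive reassurance module when risk is high."""
    return drop_off_risk(s) >= threshold


calm = SessionSignals(cta_hover_seconds=1, scroll_reversals=0, seconds_idle_at_step=2)
hesitant = SessionSignals(cta_hover_seconds=10, scroll_reversals=8, seconds_idle_at_step=40)
print(should_intervene(calm), should_intervene(hesitant))
```

The point of the sketch is the shape of the decision, not the numbers: the score is computed during the session, so the journey can be adjusted before the drop-off rather than analyzed after it.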

2) Micro-Adaptation (from segments to moments)

Personalization is no longer “first-time vs. returning.” It’s per-session micro-adaptation: reordering PDP sections, rewriting microcopy, or elevating reassurance modules, all contextually. Done properly, this compounds the revenue lifts observed in the research because you’re aligning with intent in the moment. (McKinsey & Company)

3) AI-Assisted UX (design evidence over design opinion)

Computer-vision and pattern models synthesize gaze flow, saliency, and sentiment to suggest evidence-based layout changes. Your teams debate less and validate more. This is where “design intuition” evolves into “design instrumentation.”

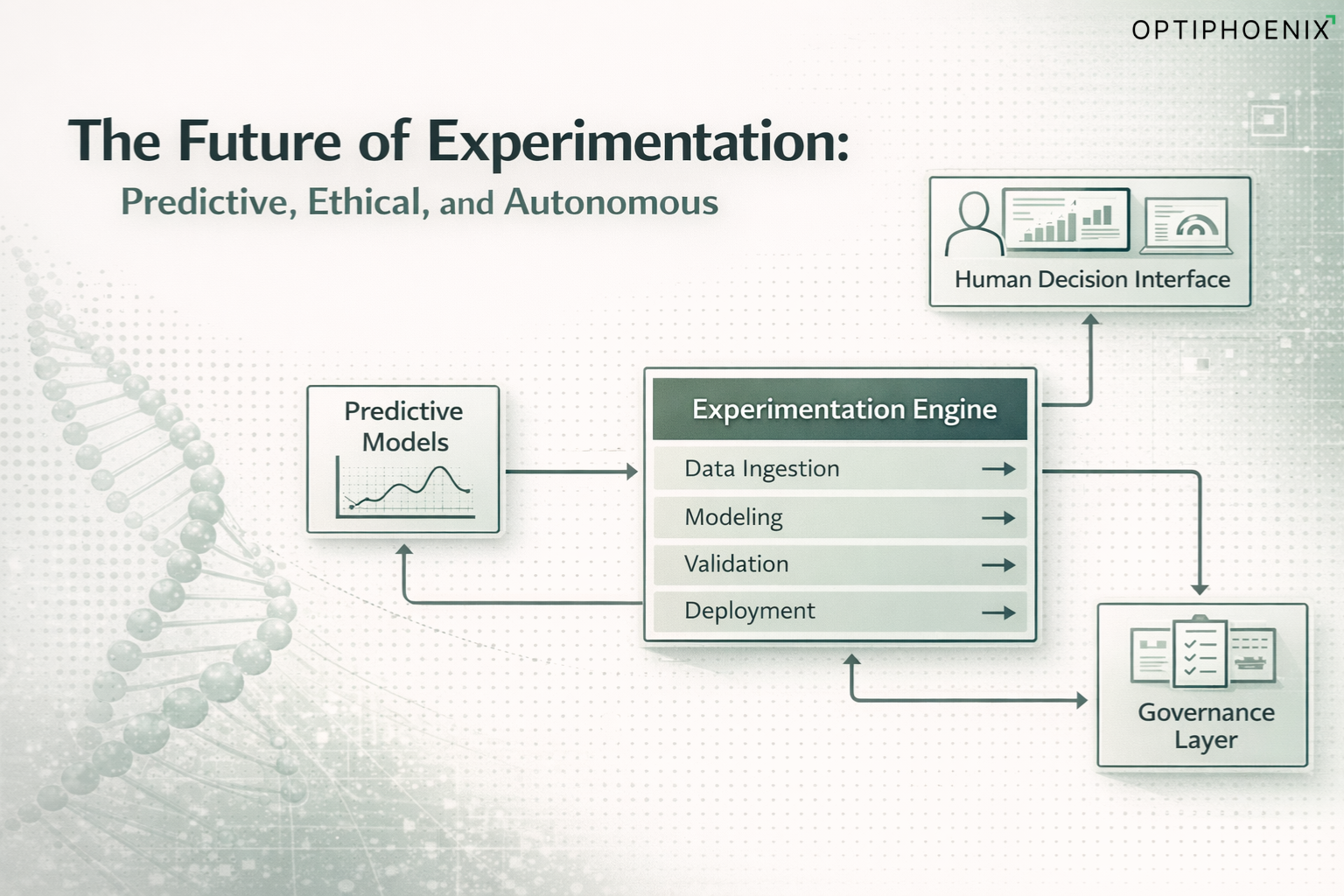

4) Autonomous Experimentation (velocity → value)

The newer class of platforms runs continuous micro-tests: generate variants, allocate traffic, and retire losers, on a loop, against a declared objective (e.g., “+5% checkout completion”). Velocity increases 5–6x, and time-to-insight compresses from weeks to hours when governance is in place. (Forrester)

Caveat: autonomy is powerful only if hypotheses, guardrails, and success metrics are explicit. Otherwise you optimize for noise.
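One common way to implement an allocate-and-retire loop is a multi-armed bandit. The sketch below uses Beta-Bernoulli Thompson sampling, which is our assumption for illustration; the platforms referred to above may allocate traffic differently. The class name, the `min_pulls` and `floor` retirement thresholds, and the simulated conversion rates are hypothetical.

```python
import random


class ThompsonAllocator:
    """Beta-Bernoulli Thompson sampling over a set of named variants."""

    def __init__(self, variants, seed=None):
        self.rng = random.Random(seed)
        # [alpha, beta] per variant, starting from a uniform Beta(1, 1) prior.
        self.posteriors = {v: [1, 1] for v in variants}
        self.pulls = {v: 0 for v in variants}

    def choose(self):
        """Sample a conversion rate from each posterior and serve the highest draw."""
        draws = {v: self.rng.betavariate(a, b) for v, (a, b) in self.posteriors.items()}
        return max(draws, key=draws.get)

    def record(self, variant, converted):
        """Update the served variant's posterior with the observed outcome."""
        self.pulls[variant] += 1
        self.posteriors[variant][0 if converted else 1] += 1

    def retire_losers(self, min_pulls=200, floor=0.5):
        """Retire variants whose posterior mean is below `floor` times the best mean."""
        means = {v: a / (a + b) for v, (a, b) in self.posteriors.items()}
        best = max(means.values())
        for v in list(self.posteriors):
            if self.pulls[v] >= min_pulls and means[v] < floor * best:
                del self.posteriors[v]


def simulate(true_rates, rounds=3000, seed=7):
    """Run the loop against simulated visitors with known conversion rates."""
    alloc = ThompsonAllocator(true_rates, seed=seed)
    for i in range(rounds):
        v = alloc.choose()
        alloc.record(v, alloc.rng.random() < true_rates[v])
        if (i + 1) % 500 == 0:
            alloc.retire_losers()
    return alloc


result = simulate({"control": 0.05, "variant_b": 0.20})
print(result.pulls)
```

Note that the objective here is a single conversion flag. That is exactly the caveat above: if the declared objective is a poor proxy, the loop will efficiently optimize the wrong thing.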

5) Ethical Personalization (trust is a conversion metric)

Privacy-first design with zero-party data and intent transparency isn’t a legal checkbox; it’s a conversion accelerator. In 2026, buyers reward explainability—why you’re showing a recommendation and how they control it. McKinsey’s 2025 work on targeted promotions shows small, responsible optimizations (1–2% sales lift; 1–3% margin improvement) beating blunt-force discounting. (McKinsey & Company)

Four Failure Modes (and How to Avoid Them)

- The Velocity Trap

- Symptom: Dozens of tests, shallow learnings, inconclusive lifts.

- Fix: Shift to learning KPIs: % hypotheses validated, reuse rate of insights, decision latency.

- The Proxy Problem

- Symptom: Teams optimize CTR, not contribution margin; pageviews, not purchase confidence.

- Fix: Tie optimization to economic outcomes (RPS, contribution per session, LTV) and confidence metrics (size certainty, delivery clarity).

- The Labyrinth of Offers

- Symptom: Overlapping promotions increase AOV on paper while eroding margin and confusing buyers.

- Fix: Treat pricing and offers as behavioral experiments; prefer choice architecture that minimizes cognitive load. (Evidence: simplifying decisions typically outperforms complex bundles.)

- Agent Washing

- Symptom: Rebranding chatbots as “agents,” no autonomy or ROI.

- Fix: Demand capabilities proof: multi-step goal execution, safe rollback, measurable delta vs. baselines. Analysts expect many agentic projects to be cut without this discipline. (Reuters)

The Executive Playbook: AI x CRO as Operating System

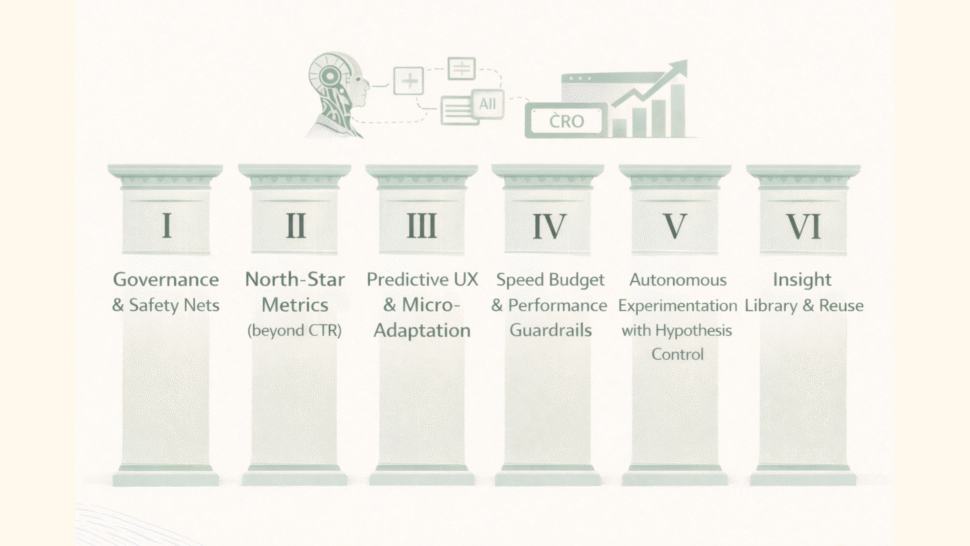

Below is a governed, 6-pillar framework we implement with enterprise and high-growth brands. It’s engineered to create reliable conversion lift while building a repeatable learning asset.

Pillar 1: Governance & Safety Nets

- Access control & rollback: pre-approved deployment lanes, instant disable paths.

- Risk catalog: what can/can’t be touched autonomously (e.g., payments, inventory promises).

- Model accountability: designate human owners for hypotheses, ethics, and outcome interpretation.

Why it matters: Analyst houses flag governance as the gating factor to AI ROI in 2026. (Forrester)
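A risk catalog can be as simple as a lookup that every automated change must pass through. The sketch below is one possible shape, not a prescribed implementation; the surface names, the three autonomy levels, and the `may_deploy` helper are hypothetical.

```python
from enum import Enum
from typing import Optional


class Autonomy(Enum):
    AUTONOMOUS = "autonomous"          # platform may change without review
    HUMAN_APPROVAL = "human_approval"  # change waits for an accountable owner
    FORBIDDEN = "forbidden"            # never touched by automated experiments


# Hypothetical catalog; surface names are illustrative.
RISK_CATALOG = {
    "pdp.microcopy": Autonomy.AUTONOMOUS,
    "pdp.section_order": Autonomy.AUTONOMOUS,
    "cart.promo_banner": Autonomy.HUMAN_APPROVAL,
    "checkout.payment_methods": Autonomy.FORBIDDEN,
    "pdp.inventory_promise": Autonomy.FORBIDDEN,
}


def autonomy_for(surface: str) -> Autonomy:
    """Unknown surfaces default to human approval rather than autonomy."""
    return RISK_CATALOG.get(surface, Autonomy.HUMAN_APPROVAL)


def may_deploy(surface: str, approved_by: Optional[str] = None) -> bool:
    """Return True only if the change is allowed under the catalog."""
    level = autonomy_for(surface)
    if level is Autonomy.AUTONOMOUS:
        return True
    if level is Autonomy.HUMAN_APPROVAL:
        return approved_by is not None
    return False  # FORBIDDEN: no automated path, even with a named approver


print(may_deploy("pdp.microcopy"))
print(may_deploy("cart.promo_banner", approved_by="cro-lead"))
print(may_deploy("checkout.payment_methods", approved_by="cro-lead"))
```

Defaulting unknown surfaces to human approval is a deliberate choice in this sketch: a surface nobody has classified should not become autonomous by omission.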

Pillar 2: North-Star Metrics (beyond CTR)

- RPS (revenue per session), Checkout Completion, Net Margin Contribution, Confidence Lift (fit/delivery clarity).

- Learning velocity: time from observation → implemented improvement.

Why it matters: If you don’t measure decision quality, you’ll over-invest in test quantity.
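To make these metrics concrete, here is a small worked sketch. The field names and the sample figures are invented for illustration, and “variable costs” is our assumed stand-in for whatever a finance team subtracts to reach contribution margin.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class PeriodStats:
    sessions: int
    checkout_starts: int
    orders: int
    revenue: float
    variable_costs: float  # assumed: COGS, shipping, discounts, payment fees


def revenue_per_session(p: PeriodStats) -> float:
    """RPS: revenue divided by all sessions, converting or not."""
    return p.revenue / p.sessions


def checkout_completion(p: PeriodStats) -> float:
    """Share of started checkouts that end in an order."""
    return p.orders / p.checkout_starts


def contribution_per_session(p: PeriodStats) -> float:
    """Revenue minus variable costs, spread across all sessions."""
    return (p.revenue - p.variable_costs) / p.sessions


def learning_velocity_days(observed: date, implemented: date) -> int:
    """Days from an observation to the implemented improvement."""
    return (implemented - observed).days


# Invented sample numbers, for illustration only.
week = PeriodStats(sessions=10_000, checkout_starts=800, orders=480,
                   revenue=36_000.0, variable_costs=27_000.0)
print(revenue_per_session(week))       # 3.6
print(checkout_completion(week))       # 0.6
print(contribution_per_session(week))  # 0.9
print(learning_velocity_days(date(2026, 1, 5), date(2026, 1, 19)))  # 14
```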

Pillar 3: Predictive UX & Micro-Adaptation

- Activate models that forecast hesitation zones (e.g., size selector, payment step).

- Pre-position reassurance: delivery windows, returns, safety badges where gaze peaks, above the fold, near the gallery.

Why it matters: The 70% abandonment datapoint is a map: remove uncertainty where it starts, not where it ends. (Baymard Institute)

Pillar 4: Speed Budget & Performance Guardrails

- Set speed SLOs (e.g., LCP < 2.5s; TTI < 3s) and automate enforcement.

- Treat 100ms deltas seriously; they move conversion. (Think with Google)

Why it matters: AI-generated experiences are useless if they load slowly.
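Automated enforcement can start as a small check that fails a build when a budget is breached. The sketch below assumes the metrics have already been collected by some measurement tool and are passed in as milliseconds. The function name, the dictionary shape, and the 100ms regression tolerance are hypothetical; the LCP and TTI limits mirror the example SLOs above.

```python
# Budgets in milliseconds; limits mirror the example SLOs (LCP < 2.5s, TTI < 3s).
SPEED_BUDGET_MS = {"lcp": 2500, "tti": 3000}

# Assumed policy: a release may not be more than 100ms slower than the baseline.
MAX_REGRESSION_MS = 100


def budget_violations(measured, baseline=None):
    """Return human-readable violations; an empty list means the release passes."""
    violations = []
    for metric, limit in SPEED_BUDGET_MS.items():
        value = measured[metric]
        if value >= limit:
            violations.append(f"{metric}: {value}ms breaches the {limit}ms budget")
        elif baseline is not None and value - baseline[metric] > MAX_REGRESSION_MS:
            delta = value - baseline[metric]
            violations.append(f"{metric}: regressed by {delta}ms vs. baseline")
    return violations


release = {"lcp": 2300, "tti": 2900}
previous = {"lcp": 2150, "tti": 2880}
for problem in budget_violations(release, previous):
    print(problem)
```

In a CI job, a non-empty result would typically translate into a non-zero exit code so the release is blocked until the regression is explained or fixed.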

Pillar 5: Autonomous Experimentation with Hypothesis Control

- Use autonomy for micro-tests; keep macro changes human-stewarded.

- Encode hypotheses and stop-loss rules into the platform.

- Require counterfactual logging to quantify model impact.
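The sketch below shows one way a hypothesis, a stop-loss rule, and a counterfactual log might sit together in code. It is illustrative only: the class and field names are hypothetical, “counterfactual logging” is interpreted here as recording what the model would have served alongside what was actually served, and the stop-loss is a naive threshold on observed rates rather than a statistical test.

```python
from dataclasses import dataclass, field


@dataclass
class ArmStats:
    sessions: int = 0
    conversions: int = 0

    @property
    def rate(self) -> float:
        return self.conversions / self.sessions if self.sessions else 0.0


@dataclass
class GuardedExperiment:
    hypothesis: str                  # stated up front, owned by a human
    max_relative_drop: float = 0.10  # stop-loss: halt if variant is >10% worse
    min_sessions: int = 1000         # do not judge either arm on tiny samples
    control: ArmStats = field(default_factory=ArmStats)
    variant: ArmStats = field(default_factory=ArmStats)
    log: list = field(default_factory=list)

    def record(self, session_id, arm, model_choice, converted):
        """Update arm stats and log served arm vs. what the model would choose."""
        stats = self.control if arm == "control" else self.variant
        stats.sessions += 1
        stats.conversions += int(converted)
        self.log.append({"session": session_id, "served": arm,
                         "model_choice": model_choice, "converted": converted})

    def stop_loss_triggered(self) -> bool:
        """Naive rule: compare observed rates once both arms have enough sessions."""
        if min(self.control.sessions, self.variant.sessions) < self.min_sessions:
            return False
        if self.control.rate == 0:
            return False
        drop = (self.control.rate - self.variant.rate) / self.control.rate
        return drop > self.max_relative_drop


exp = GuardedExperiment(hypothesis="Delivery window near the CTA lifts checkout completion")
for i in range(1000):
    exp.record(f"c{i}", "control", "variant", converted=i < 50)
for i in range(1000):
    exp.record(f"v{i}", "variant", "variant", converted=i < 40)
print(exp.stop_loss_triggered())
```

Because control sessions also record the model's preferred choice, the log can later be used to compare outcomes where the model's choice was served against outcomes where it was not.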

Pillar 6: Insight Library & Reuse

- Archive every test (goal, context, variant, outcome, “why”).

- Build playbooks by journey zone (PDP, cart, checkout, post-purchase).

- Make reusability a KPI: a learning is valuable only when it travels.
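An insight library does not need special tooling to get started. As a rough sketch, the record below carries the fields listed above (goal, context as journey zone, variant, outcome, “why”), and reuse rate is computed as the share of archived insights that informed at least one later test. The class names and that definition of reuse rate are assumptions for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Insight:
    test_id: str
    goal: str
    zone: str     # journey zone: "pdp", "cart", "checkout", "post-purchase"
    variant: str
    outcome: str  # e.g. "win", "loss", "inconclusive"
    why: str      # the explanation the team believes, not just the result
    reused_in: list = field(default_factory=list)


class InsightLibrary:
    def __init__(self):
        self._items = {}

    def archive(self, insight: Insight) -> None:
        self._items[insight.test_id] = insight

    def mark_reused(self, source_test_id: str, new_test_id: str) -> None:
        """Record that a later test was informed by an archived insight."""
        self._items[source_test_id].reused_in.append(new_test_id)

    def playbook(self, zone: str) -> list:
        """All archived insights for one journey zone."""
        return [i for i in self._items.values() if i.zone == zone]

    def reuse_rate(self) -> float:
        """Assumed definition: share of insights reused at least once."""
        if not self._items:
            return 0.0
        reused = sum(1 for i in self._items.values() if i.reused_in)
        return reused / len(self._items)


lib = InsightLibrary()
lib.archive(Insight("T-001", "checkout completion", "checkout",
                    "delivery window near CTA", "win", "reduced delivery uncertainty"))
lib.archive(Insight("T-002", "add-to-cart rate", "pdp",
                    "size guide above fold", "inconclusive", "low traffic on mobile"))
lib.mark_reused("T-001", "T-007")
print(lib.reuse_rate())  # 0.5
```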

What “Maturity” Looks Like in 2026

Level 1: Tactical

- Separate teams, ad-hoc tests, speed focus, weak linking to economics.

Level 2: Programmatic

- Quarterly roadmaps, mixed methods research, micro-adaptation in PDP/checkout, basic autonomy under guardrails.

Level 3: Systemic

- CRO acts as a conversion intelligence OS: predictive UX, governed autonomy, privacy-first personalization, and a living insight library.

- Success is measured by decision half-life (how fast your org updates belief), confidence metrics, and margin contribution, not just “wins.”

Field Notes: Where We See the Fastest Wins

- PDP confidence zones: Place fit, fabric, delivery/returns near the gallery and price block. It reduces decision lag and prevents back-and-forth behavior that inflates abandonment.

- Choice architecture: Offers that eliminate middle options (e.g., two clear bundles rather than three similar ones) push AOV without cognitive tax.

- Size & selection support: Interactive sizing + microcopy (“true to size / stretches”) plus visual exemplars help first-time buyers commit and reduce returns.

- Checkout simplification: Hide non-essential fields; progressive disclosure; default secure payment; predictable delivery windows.

- Speed budget: Guardrails tied to real revenue deltas from speed research; instrumented in CI/CD so no release regresses Core Web Vitals. (Think with Google)

Ethics as a Growth Lever

- Explainability: Let users see why a recommendation appears; it increases acceptance and adds perceived fairness.

- Consent loops: Encourage users to co-create their preferences (think Sephora-style “voluntary mapping”); it leads to smarter relevance at lower discounts. (McKinsey & Company)

- Guardrails for agents: No autonomous edits to sensitive promises (pricing, delivery) without human validation. Analysts expect many “agentic” initiatives to be unwound for lack of value clarity—don’t be one of them. (Reuters)

Executive Checklist

- We’ve named accountable owners for AI hypotheses, ethics, and outcomes.

- North-star metrics = RPS, checkout completion, margin, confidence—not just CTR.

- Speed budget enforced in CI/CD; 100ms matters. (Think with Google)

- Predictive models flag hesitation zones; reassurance is visible where attention peaks.

- Autonomous testing runs under stop-loss and rollback rules.

- We maintain a living insight library; reuse rate is tracked.

- Personalization is consent-based and explainable; targeted promos are measured for margin, not just lift. (McKinsey & Company)

- Quarterly governance review: what changed, what we learned, how belief updated.

Closing Perspective: What the Best Teams Do Differently

The elite growth organizations we work with share three habits:

- They move at the speed of understanding, not the speed of release.

- They optimize for confidence, not clicks.

- They turn lessons into systems, so every experiment funds the next decision with more certainty.

AI is not your strategy. Learning is.

AI simply gives learning a nervous system: fast, predictive, and disciplined.

If you want 2026 to be your inflection year, don’t buy more tools.

Build the operating system where experimentation, data, and ethics work in concert to increase the one thing your buyers reward most: trust.

FAQs

1. What is predictive experimentation in CRO?

It’s the use of machine learning to forecast experiment outcomes before full rollout.

It helps teams skip low-impact ideas, allocate traffic smarter, and learn faster — turning prediction into a CRO multiplier, not a shortcut.

2. How do autonomous testing platforms affect governance & risk?

Autonomous systems boost speed but can break governance.

Without human checkpoints, they risk conflicting variants, poor QA, and unreliable data.

The fix: strong guardrails — governance as velocity protection.

3. What metrics should CEOs/CMOs track beyond CTR?

CTR shows activity, not value.

Modern programs track:

- Engaged Conversion Rate (ECR) – quality of conversions

- Learning Velocity (LV) – insights gained per test

- Experience Efficiency (EE) – conversion per friction removed

4. How does ethical personalization improve conversions and margins?

Relevance built on trust outlasts relevance built on urgency.

Transparent, consent-driven experiences lower churn, raise AOV, and deepen loyalty — proving that empathy converts better than pressure.

5. How is AI reshaping experiment design and developer workflows?

AI is collapsing the gap between idea and launch.

Code-assist tools build variants in hours, not days.

Pattern-recognition models suggest hypotheses based on behavioral data.

And automated QA keeps quality intact at scale.

The result: CRO teams spend less time coding and more time learning.